Classifying Human Emotions from Facial Images

Facial Emotion Recognition using TensorFlow and InceptionV3

Christopher A. Murphy, Nishad Vinayek, John Zumel

Fall Term, 2024

Project Overview

This project explored the development of a deep learning model that recognizes human facial emotions from grayscale images. It applied data preprocessing, class rebalancing, transfer learning with a pre-trained InceptionV3 network, and hyperparameter tuning to classify seven emotions.

Problem Statement

The objective was to build a robust image classification model that identifies seven emotions from facial expressions: anger, fear, happiness, sadness, surprise, neutral, and disgust. The task is complicated by the subtlety of emotional cues, imbalanced training data, and limited image resolution and quality.

Methods

Tools: Python, Google Colab (with hardware acceleration), TensorFlow, Keras

Architecture: InceptionV3 with frozen base layers, global average pooling, dropout (0.5), and a dense softmax output layer (7 classes). Optimizer: Adam (learning rate 1e-4); loss: categorical cross-entropy.
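A minimal Keras sketch of this architecture follows. FER-2013 images are 48x48 with a single channel, while Keras' `InceptionV3` expects three channels and inputs no smaller than 75x75, so the sketch assumes each image is upscaled to 75x75 and its gray channel is copied into all three. The 75x75 size, the [-1, 1] rescaling, and the function name `build_model` are assumptions for illustration, not details taken from the project.

```python
import tensorflow as tf
from tensorflow.keras import layers, models

NUM_CLASSES = 7  # seven FER-2013 emotion labels


def build_model(weights="imagenet", num_classes=NUM_CLASSES):
    """Frozen InceptionV3 base + GAP + dropout + softmax head (sketch)."""
    # Assumption: raw 48x48 grayscale pixels in [0, 255] are adapted inside the model.
    inputs = layers.Input(shape=(48, 48, 1))
    # Assumption: upscale to 75x75, the smallest input size Keras InceptionV3 accepts.
    x = layers.Resizing(75, 75)(inputs)
    # Copy the single gray channel into R, G, and B to match the expected input shape.
    x = layers.Concatenate(axis=-1)([x, x, x])
    # Assumption: map [0, 255] to [-1, 1], the range InceptionV3 was trained on.
    x = layers.Rescaling(1.0 / 127.5, offset=-1.0)(x)

    base = tf.keras.applications.InceptionV3(
        include_top=False, weights=weights, input_shape=(75, 75, 3)
    )
    base.trainable = False  # freeze every pretrained layer
    x = base(x, training=False)  # keep BatchNorm statistics in inference mode
    x = layers.GlobalAveragePooling2D()(x)
    x = layers.Dropout(0.5)(x)
    outputs = layers.Dense(num_classes, activation="softmax")(x)

    model = models.Model(inputs, outputs)
    model.compile(
        optimizer=tf.keras.optimizers.Adam(learning_rate=1e-4),
        loss="categorical_crossentropy",
        metrics=["accuracy"],
    )
    return model
```

Passing `weights=None` builds the same graph without downloading the ImageNet weights, which is convenient for quick shape checks.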

Training: up to 300 epochs, with early stopping based on validation performance.
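Early stopping maps naturally onto Keras' `EarlyStopping` callback. In this sketch the monitored metric (`val_loss`), the default patience of 10 epochs, and restoring the best weights are assumptions; the project only states the 300-epoch ceiling. `train_ds` and `val_ds` stand for hypothetical `tf.data` datasets of (image, one-hot label) batches, and `train_with_early_stopping` is a hypothetical helper name.

```python
import tensorflow as tf


def train_with_early_stopping(model, train_ds, val_ds, max_epochs=300, patience=10):
    """Fit for at most `max_epochs`, stopping once validation loss stops improving."""
    early_stop = tf.keras.callbacks.EarlyStopping(
        monitor="val_loss",  # assumed monitored metric
        patience=patience,  # assumed: epochs to wait without improvement
        restore_best_weights=True,  # assumed: roll back to the best epoch's weights
    )
    return model.fit(
        train_ds,
        validation_data=val_ds,
        epochs=max_epochs,
        callbacks=[early_stop],
    )
```

The returned `History` object records one entry per completed epoch, so its length shows where training actually stopped.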

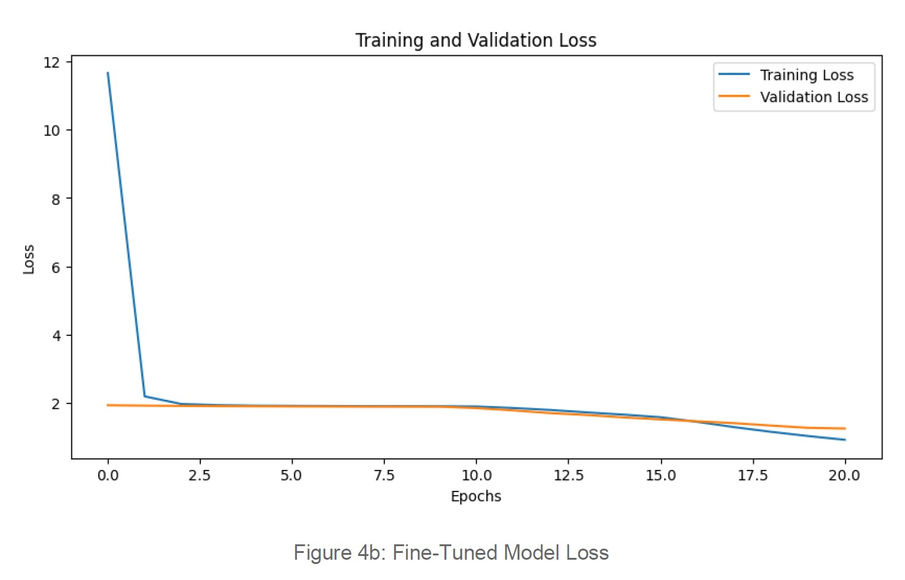

Fine-Tuning: top 50 layers of InceptionV3 unfrozen, learning rate lowered to 1e-5, dropout (0.5).
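A sketch of this fine-tuning step, assuming "layers" means entries in the base model's `layers` list and that `base` is the InceptionV3 sub-model nested inside `model`, as in a typical Keras transfer-learning setup. The helper name `unfreeze_top_layers` is hypothetical. The classification head, including its 0.5 dropout, is left unchanged; recompiling is required for the new trainable flags and the lower learning rate to take effect.

```python
import tensorflow as tf


def unfreeze_top_layers(model, base, n_layers=50, learning_rate=1e-5):
    """Unfreeze the last `n_layers` of `base`, then recompile `model`."""
    base.trainable = True  # make the whole base trainable again...
    cutoff = max(0, len(base.layers) - n_layers)
    for layer in base.layers[:cutoff]:
        layer.trainable = False  # ...then re-freeze everything below the top n_layers
    # Recompile so the updated trainable flags and smaller learning rate are used.
    model.compile(
        optimizer=tf.keras.optimizers.Adam(learning_rate=learning_rate),
        loss="categorical_crossentropy",
        metrics=["accuracy"],
    )
    return model
```

Computing `cutoff` explicitly avoids the slicing pitfall where `layers[:-0]` would select nothing and leave every layer trainable.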

Class Imbalance: augmented the minority classes and removed the "disgust" class.
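The project does not specify which augmentations were used or how many samples were added, so the NumPy sketch below makes two assumptions: each minority class is topped up to the size of the largest class, and new samples are horizontally flipped copies of randomly chosen existing images. It also shows dropping a class and re-indexing the remaining labels so they stay contiguous. `DISGUST = 1` assumes the standard FER-2013 label order (0 angry, 1 disgust, 2 fear, 3 happy, 4 sad, 5 surprise, 6 neutral); all function names are hypothetical.

```python
import numpy as np

DISGUST = 1  # assumed index of "disgust" in the standard FER-2013 label order


def drop_class(x, y, class_idx=DISGUST):
    """Remove one class and shift higher labels down so labels stay contiguous."""
    keep = y != class_idx
    x, y = x[keep], y[keep]
    return x, np.where(y > class_idx, y - 1, y)


def augmentation_plan(y, num_classes):
    """Number of extra samples each class needs to match the largest class."""
    counts = np.bincount(y, minlength=num_classes)
    return counts.max() - counts


def oversample_with_flips(x, y, num_classes, rng):
    """Top up minority classes with horizontally flipped copies (assumed augmentation)."""
    extra = augmentation_plan(y, num_classes)
    xs, ys = [x], [y]
    for cls, n_extra in enumerate(extra):
        members = np.flatnonzero(y == cls)
        if n_extra == 0 or members.size == 0:
            continue  # nothing to add, or no source images to copy from
        picks = rng.choice(members, size=n_extra, replace=True)
        xs.append(x[picks][:, :, ::-1])  # flip along the width axis of (N, H, W) images
        ys.append(np.full(n_extra, cls, dtype=y.dtype))
    return np.concatenate(xs), np.concatenate(ys)
```

In practice, oversampling like this would be applied to the training split only, so that validation and test accuracy still reflect the original class distribution.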

Key Learnings

Although transfer learning is often seen as a shortcut to higher accuracy, this project surfaced several critical lessons about its limitations:

Pretrained Model Mismatch

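InceptionV3 was pretrained on RGB natural images (ImageNet), while FER-2013 consists of low-resolution (48x48) grayscale faces. Replicating the single gray channel three times satisfies the input shape but adds no color information, so the pretrained features transfer poorly.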
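The NumPy sketch below illustrates why triplicating channels adds no information. It uses random synthetic pixel values, and the filter weights are hypothetical: once R, G, and B are identical, a first-layer filter responds exactly like a single-channel filter whose weight is the sum of its three color weights, so any color-specific structure learned on RGB images collapses. A 1x1 filter keeps the example short; the same identity holds for larger kernels, position by position.

```python
import numpy as np


def to_three_channels(gray):
    """Copy a (N, H, W) grayscale batch into identical R, G, B channels."""
    return np.repeat(gray[..., np.newaxis], 3, axis=-1)


rng = np.random.default_rng(0)
faces = rng.integers(0, 256, size=(2, 48, 48)).astype(np.float64)  # synthetic pixels
rgb = to_three_channels(faces)
print(rgb.shape)  # (2, 48, 48, 3)

# A hypothetical 1x1 first-layer filter with different weights per color channel.
w = np.array([0.2, -0.5, 0.9])
response_rgb = rgb @ w  # weighted sum over the channel axis
response_gray = faces * w.sum()  # the same filter collapsed to one channel
print(np.allclose(response_rgb, response_gray))  # True
```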

Domain-Specific Pre-Training Matters

Models pretrained on faces (e.g., VGG-Face, EmotionNet) would likely transfer better than general-purpose image models.

It's Often About the Data, Not the Model

The real bottleneck was the quality of the input data.

Low resolution, poor lighting, and class imbalance limited performance in every model variant tried.

Competencies Employed

Exploratory Data Analysis (EDA)

Discovering patterns and relationships in data using visual and statistical techniques.

Python Coding

Developing data pipelines, machine learning models, and automation tools using Python’s data science ecosystem (e.g., pandas, scikit-learn, TensorFlow).

Deep Learning

Applying neural networks for tasks like image classification and NLP.

Strategy & Decisions

Developing alternative strategies based on the data analysis.

Python Environment Management

Creating and managing Python environments for data science work.

Insights & Recommendations

Turning analysis into clear, prioritized actions for stakeholders, with rationale, trade-offs, and measurable outcomes.

Data Collection

Acquiring, ingesting, validating, and organizing data with reproducible workflows and transformations so that it is compatible with downstream data science algorithms.

Neural Networking

Designing, training, and tuning deep learning models for vision, language, and prediction tasks, with attention to data preparation, hyperparameter optimization, model evaluation, and serialization.

Descriptive Analytics

Summarizing and interpreting historical data to identify patterns, trends, and insights that inform decision-making and business understanding.

Predictive Modeling

Using statistical or machine learning models to predict future outcomes.

Data Engineering

Designing and implementing systems for data collection, storage, and access.

Communication

Communicating complicated technical concepts succinctly.

Data Visualization

Presenting data insights clearly using charts, dashboards, and visual storytelling.

Project Management

Planning, executing, and managing data science projects across teams and phases.

Visual Analytics

Designing interactive tools that allow users to explore and analyze data visually.

Scripting for Analysis

Automating data processes and analysis using scripting languages like Python or R.

Cloud & Scalable Computing

Leveraging cloud platforms and parallel computing for large-scale data tasks.

Data Management

Managing structured and unstructured data using databases and data warehouses.

Additional Technical Information

Data Source(s)

The project used the publicly available FER-2013 dataset from Kaggle, which contains 35,887 grayscale facial images (48x48 pixels) across seven emotion categories.
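If the data comes as the original `fer2013.csv` file (one row per image, with an `emotion` label, a `pixels` string of 2,304 space-separated values, and a `Usage` column marking the split), a loader might look like the sketch below. The column names follow that CSV layout and are an assumption here, since some Kaggle mirrors of FER-2013 ship the images as folders of image files instead; `load_fer2013` is a hypothetical helper, and the sample row is synthetic.

```python
import csv
import io

import numpy as np

IMAGE_SIDE = 48  # FER-2013 images are 48x48 grayscale


def load_fer2013(csv_file):
    """Parse emotion,pixels,Usage rows into image, label, and split arrays."""
    images, labels, splits = [], [], []
    for row in csv.DictReader(csv_file):
        pixels = np.array([int(v) for v in row["pixels"].split()], dtype=np.uint8)
        images.append(pixels.reshape(IMAGE_SIDE, IMAGE_SIDE))
        labels.append(int(row["emotion"]))
        splits.append(row["Usage"])
    return np.stack(images), np.array(labels), np.array(splits)


# Tiny in-memory example with one synthetic row (every pixel = 7).
sample = "emotion,pixels,Usage\n3," + '"' + " ".join(["7"] * 2304) + '"' + ",Training\n"
x, y, usage = load_fer2013(io.StringIO(sample))
print(x.shape, y, usage)  # (1, 48, 48) [3] ['Training']
```

With a local copy, the same function would be called on an open file handle, for example `open("fer2013.csv", newline="")` (the file name is an assumption).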

Results Summary

| Model Variant | Validation Accuracy | Test Accuracy |
|---|---|---|
| Initial InceptionV3 Model | 44.14% | 44.14% |
| Fine-Tuned Model | 58.22% | 49.00% |
| Without "Disgust" Class | 67.98% | 48.66% |

Misclassifications were common between “neutral” and “sad” and between “fear” and “surprise.”

The “happy” class was consistently the most accurately predicted.
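Observations like these are typically read off a confusion matrix. The NumPy sketch below, with hypothetical helper names and toy labels (not the project's data), counts misclassifications in both directions for each class pair and computes per-class recall, the fraction of each true class that was predicted correctly.

```python
import numpy as np


def confusion_matrix(y_true, y_pred, num_classes):
    """Rows are true classes, columns are predicted classes."""
    cm = np.zeros((num_classes, num_classes), dtype=int)
    np.add.at(cm, (y_true, y_pred), 1)  # increment one cell per (true, predicted) pair
    return cm


def top_confused_pairs(cm, labels, k=2):
    """Class pairs with the most mix-ups, counting both directions together."""
    both_ways = cm + cm.T
    pairs = [
        (int(both_ways[i, j]), labels[i], labels[j])
        for i in range(len(labels))
        for j in range(i + 1, len(labels))
    ]
    return sorted(pairs, reverse=True)[:k]


def per_class_recall(cm):
    """Correct predictions divided by true-class counts (empty classes give 0)."""
    return cm.diagonal() / np.maximum(cm.sum(axis=1), 1)


labels = ["neutral", "sad", "happy"]
y_true = np.array([0, 0, 1, 1, 2, 2])
y_pred = np.array([1, 0, 0, 1, 2, 2])
cm = confusion_matrix(y_true, y_pred, len(labels))
print(top_confused_pairs(cm, labels, k=1))  # [(2, 'neutral', 'sad')]
print(per_class_recall(cm))  # [0.5 0.5 1. ]
```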

Future Considerations

Use a CNN designed for grayscale input or a face-specific pretrained model, and consider ensembles or attention mechanisms to capture nuanced expressions.

Apply super-resolution (ESRGAN/SR3) and self-supervised pretraining on unlabeled faces to boost detail and feature quality.